
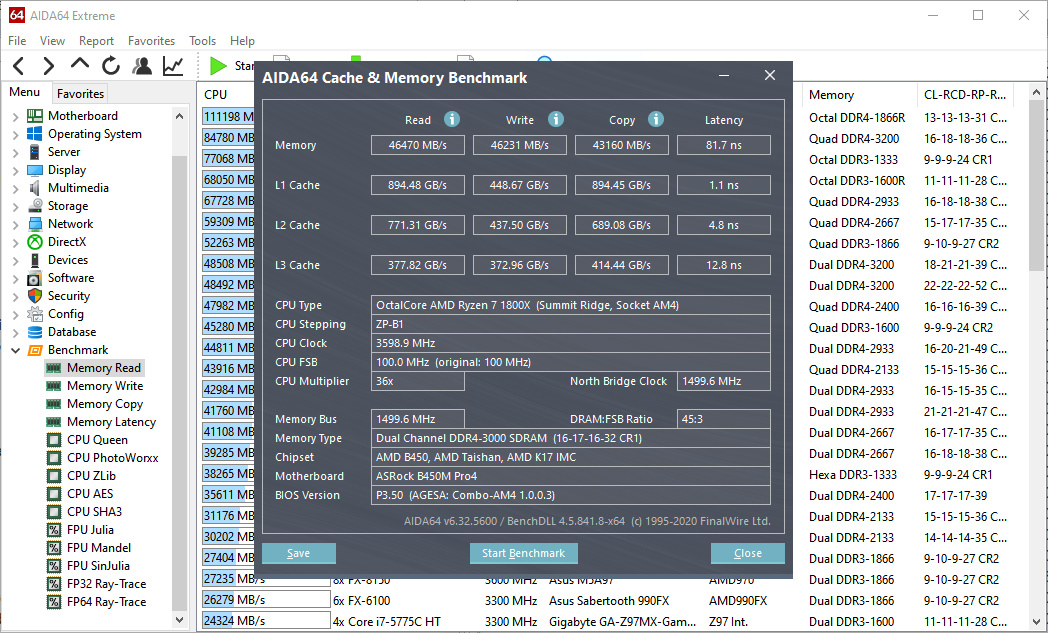

“I'm very excited to try things out and I think it will be beneficial for the community,” he says. The fact that LLaMA 2 is an open-source model will also allow external researchers and developers to probe it for security flaws, which will make it safer than proprietary models, Al-Dahle says. Nevertheless, Meta’s commitment to openness is exciting, says Luccioni, because it allows researchers like herself to study AI models’ biases, ethics, and efficiency properly.

Meta says it did not remove toxic data from the data set, because leaving it in might help LLaMA 2 detect hate speech better, and removing it could risk accidentally filtering out some demographic groups. The company says it did not use Meta user data in LLaMA 2, and excluded data from sites it knew had lots of personal information. Despite that, LLaMA 2 still spews offensive, harmful, and otherwise problematic language, just like rival models. Al-Dahle says there were two sources of training data: data that was scraped online, and a data set fine-tuned and tweaked according to feedback from human annotators to behave in a more desirable way.

SkillBench (space shooter) tests user input accuracy. RAM tests include: single/multi core bandwidth and latency. UserBenchmark will test your PC and compare the results to other users with the same components. Drive tests include: read, write, sustained write and mixed IO. Compare results with other users and see which parts you can upgrade together with the expected performance improvements.

GPU tests include: six 3D game simulations. The model was trained on 40% more data than its predecessor. CPU tests include: integer, floating and string.
